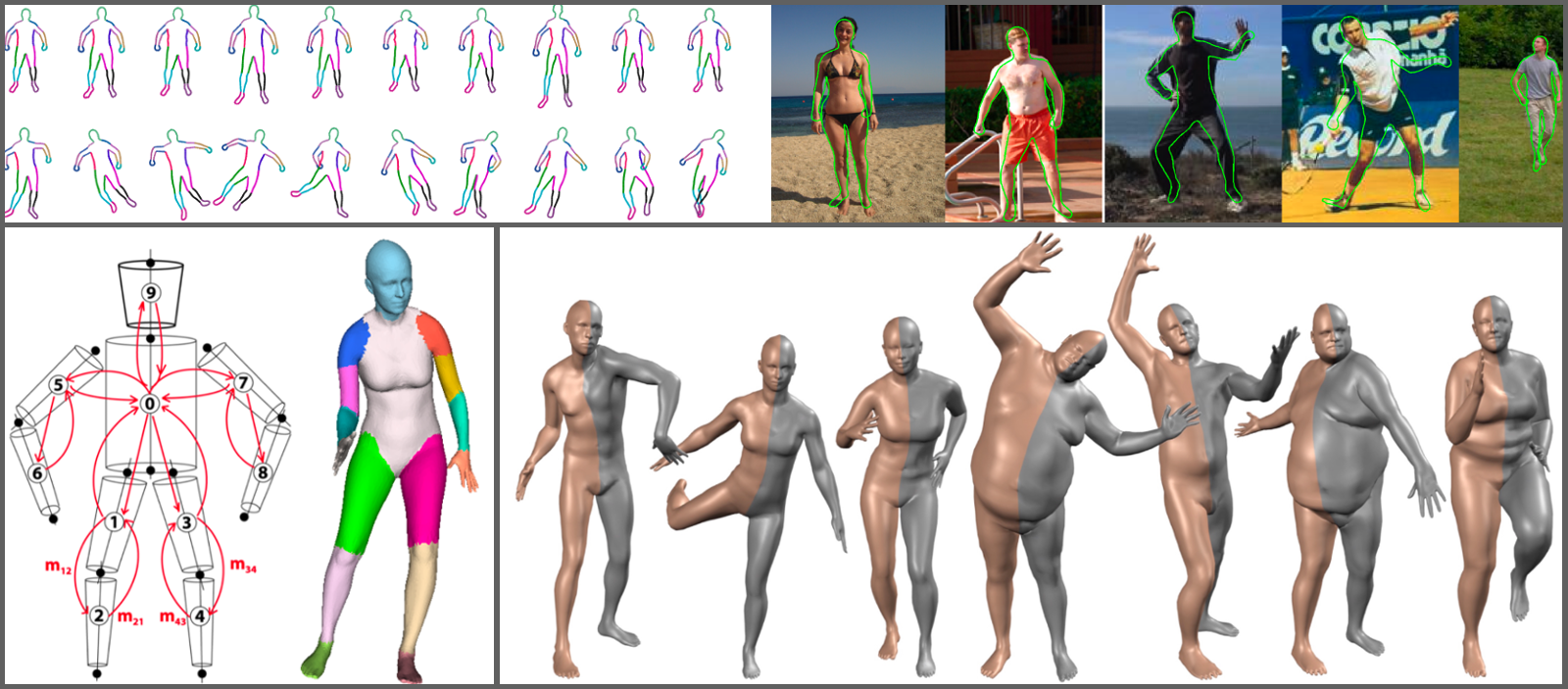

Top left: The 2D Contour People (CP) model exhibits variation in shape and pose [ ]. Top right: The CP model is optimized to find the best segmentation of person and background. Bottom left: The Stitched Puppet model [ ] has a part-based graphical structure that facilitates inference. Bottom right: The SMPL model [ ] (orange) is highly realistic when compared with ground-truth 3D meshes (grey).

In the process of teaching computers how to perceive the world, one family of objects stands out for its complexity and importance: human bodies. The human body is highly articulated, deforms as its pose changes, and varies greatly in shape across subjects. On top of that, clothing and hairstyles create a large variety of appearances.

We argue that enabling computers to model, perceive, and generate human appearance would not only be valuable for practical applications but would also be a major step toward computers that perceive the world around us. The problem has been tackled both in computer vision, which has a long tradition of model-based methods for human detection and pose estimation, and in computer graphics, where the ultimate goal is the faithful reproduction of real human bodies.

Our research on human body models aims at a convergence of the models used in computer vision and computer graphics: realistic and efficient models that are suitable for inferring their parameters from data. Modeling bodies and performing inference with them is typically computationally expensive. Our Contour People (CP) model [ ] supports efficient 2D pose inference while maintaining the realistic body shapes more commonly associated with 3D models. The Stitched Puppet (SP) model [ ] takes an alternative approach to efficient inference: it exploits the decomposition of the human body into parts and performs message-passing inference over them.
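To make "message passing over parts" concrete, here is a minimal sketch. It is not the Stitched Puppet inference algorithm: SP reasons about continuous 3D part states, whereas this toy assumes a simple chain of parts (say torso, upper arm, lower arm), each with a small set of hypothetical discrete candidate states, and runs exact max-sum message passing (max-product in log space). The function name `chain_max_sum` and all score tables are illustrative assumptions.

```python
from typing import List, Tuple


def chain_max_sum(
    unary: List[List[float]],
    pairwise: List[List[List[float]]],
) -> Tuple[float, List[int]]:
    """Exact max-sum message passing on a chain of parts (toy example).

    unary[p][s]       -- score of part p in candidate state s (higher is better)
    pairwise[p][s][t] -- compatibility of part p in state s with part p+1 in state t
    Returns the best total score and the best state index for every part.
    """
    n = len(unary)
    if n == 0:
        raise ValueError("need at least one part")
    if len(pairwise) != n - 1:
        raise ValueError("need one pairwise table per pair of neighbouring parts")

    # msg[s]: best score of parts 0..p, given that part p is in state s.
    msg = list(unary[0])
    back: List[List[int]] = []  # back[p-1][t]: best state of part p-1 if part p is in state t
    for p in range(1, n):
        new_msg, arg = [], []
        for t in range(len(unary[p])):
            # Pass a message from part p-1 to part p: maximize over the previous state.
            best_s = max(range(len(msg)), key=lambda s: msg[s] + pairwise[p - 1][s][t])
            new_msg.append(msg[best_s] + pairwise[p - 1][best_s][t] + unary[p][t])
            arg.append(best_s)
        msg = new_msg
        back.append(arg)

    # Pick the best state of the last part, then backtrack along the chain.
    last = max(range(len(msg)), key=lambda t: msg[t])
    states = [last]
    for arg in reversed(back):
        states.append(arg[states[-1]])
    states.reverse()
    return msg[last], states


# Usage: three parts with two candidate states each (made-up scores).
unary = [[1.0, 0.0], [0.0, 2.0], [0.5, 0.0]]
pairwise = [
    [[0.0, -5.0], [0.0, 0.0]],  # part 0 state 0 fits badly with part 1 state 1
    [[0.0, 0.0], [0.0, 1.0]],
]
score, states = chain_max_sum(unary, pairwise)
```

Because the chain has no loops, one forward sweep of messages plus backtracking yields the globally best joint assignment while only ever comparing neighbouring parts, which is the kind of saving a part-based decomposition is meant to provide.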

Finally, we are working toward modeling realistic virtual humans. To that end, we have developed a series of 3D body models that can be used for both graphics and vision: BlendSCAPE [ ], Delta [ ], Dyna [ ] and SMPL [ ]. In particular, SMPL is a human body model that is both realistic and simple. SMPL distills thousands of body scans into a 3D statistical model of the human body with state-of-the-art realism (comparable to or better than much more complicated models), real-time rendering, and full compatibility with standard animation software.
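As published, SMPL deforms a template mesh with linear blend shapes (one set driven by body shape, one by pose) and then poses it with standard linear blend skinning; this linearity is a large part of why it stays simple and works with animation software. The sketch below covers only the shape term: template vertices plus a weighted sum of shape directions, with one coefficient `beta` per direction. The function name, the three-vertex "mesh", and the shape directions are hypothetical; in the real model the directions are learned from registered scans, and pose blend shapes and skinning are omitted here.

```python
from typing import List

Vec3 = List[float]


def shaped_vertices(
    template: List[Vec3],
    shape_dirs: List[List[Vec3]],
    betas: List[float],
) -> List[Vec3]:
    """Return template + sum_m betas[m] * shape_dirs[m], vertex by vertex.

    template   -- rest-shape vertices, one [x, y, z] per vertex
    shape_dirs -- one per-vertex offset field per shape coefficient (hypothetical data)
    betas      -- shape coefficients, one per shape direction
    """
    if len(shape_dirs) != len(betas):
        raise ValueError("need exactly one coefficient per shape direction")
    out = [list(v) for v in template]  # copy so the template is not modified
    for beta, direction in zip(betas, shape_dirs):
        if len(direction) != len(template):
            raise ValueError("each shape direction needs one offset per vertex")
        for i, offset in enumerate(direction):
            for k in range(3):
                out[i][k] += beta * offset[k]
    return out


# Usage: a toy 3-vertex "mesh" with two shape directions.
template = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
shape_dirs = [
    [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0], [0.0, 0.0, 1.0]],  # lifts every vertex along z
    [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],  # moves only the first vertex along x
]
verts = shaped_vertices(template, shape_dirs, [0.5, -2.0])
```

Setting all coefficients to zero returns the template unchanged, and because the model is linear in `betas`, fitting shape to data can reuse standard least-squares machinery.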