Capturing the motion of people outside laboratory environments and over long periods is impractical with camera-based systems. A viable alternative to camera-based MoCap is a MoCap system built on inertial measurement units (IMUs). Such systems use IMUs to capture and reconstruct human pose without the need for external visual sensing. The goal is to capture people moving in the wild, performing unscripted actions and natural interactions without constraints.

To this end, we use a NanSense BioMed bundle with a total of 54 sensors, combining an IMU-based body suit, IMU-based gloves and insole pressure sensors. We also use an XSens MVN Awinda system that employs 17 wireless IMU sensors, together with ManusVR gloves that employ 2 IMUs and 5 flex sensors.

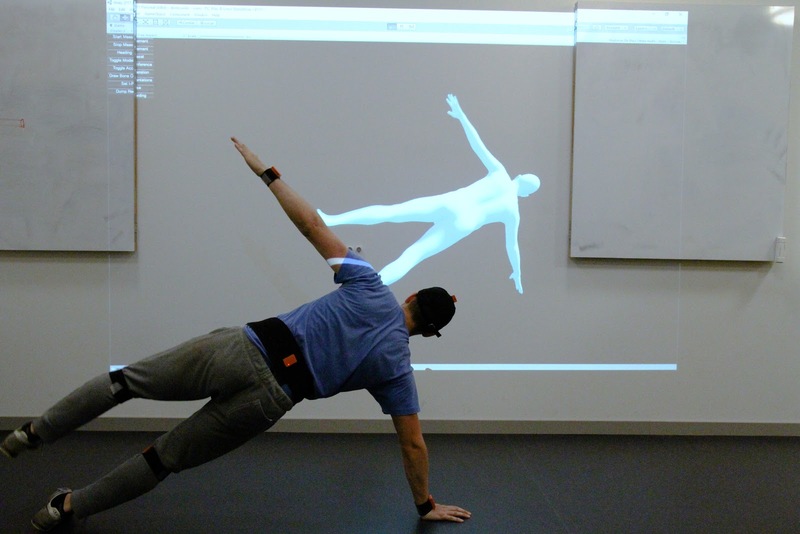

These systems can either capture raw sensor measurements or reconstruct 3D skeletons of people in motion. However, neither is a natural representation of a moving person, because real humans have a dense body surface that cannot be captured with inertial sensors. To account for this, we develop state-of-the-art methods that convert the output of such systems into parameters of our meshed statistical body model, SMPL. This richer representation lets us estimate a dense human shape for more natural motion capture, which in turn enables reasoning about interactions of people with each other or with the physical world.
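
To make the target representation more concrete, here is a minimal sketch of one small step in such a conversion: packing per-joint rotations into an SMPL-style pose vector. SMPL describes pose with 24 joints, each stored as a 3-value axis-angle vector, for 72 values in total. The sketch is not our conversion method. The function names are hypothetical, and it assumes the skeleton has already been mapped to SMPL's joint order with each rotation given as a local (parent-relative) 3x3 matrix, which a real IMU system does not provide out of the box.

```python
import math

NUM_SMPL_JOINTS = 24  # SMPL articulates 24 joints, root included


def rotmat_to_axis_angle(R):
    """Convert a 3x3 rotation matrix (nested lists) to an axis-angle vector."""
    # The skew-symmetric part of R equals 2*sin(theta) times the unit axis.
    r = [R[2][1] - R[1][2], R[0][2] - R[2][0], R[1][0] - R[0][1]]
    sin_theta = 0.5 * math.sqrt(sum(c * c for c in r))
    cos_theta = 0.5 * (R[0][0] + R[1][1] + R[2][2] - 1.0)
    theta = math.atan2(sin_theta, cos_theta)

    if sin_theta > 1e-6:
        # General case: normalise the axis, then scale it by the angle.
        return [theta * c / (2.0 * sin_theta) for c in r]
    if cos_theta > 0.0:
        # Tiny rotation: sin(theta) ~ theta, so the vector is about r / 2.
        return [0.5 * c for c in r]

    # Rotation by (almost) 180 degrees: the skew part vanishes, so recover
    # the axis from the symmetric part, using R + I = 2 * axis * axis^T.
    k = max(range(3), key=lambda i: R[i][i])
    a_k = math.sqrt(max(0.0, (R[k][k] + 1.0) / 2.0))
    axis = [(R[k][j] + R[j][k]) / (4.0 * a_k) for j in range(3)]
    axis[k] = a_k
    return [theta * c for c in axis]


def skeleton_to_pose_vector(joint_rotations):
    """Flatten 24 local joint rotation matrices into a 72-value pose vector.

    Hypothetical helper: assumes the input is already in SMPL joint order.
    """
    if len(joint_rotations) != NUM_SMPL_JOINTS:
        raise ValueError("expected %d joint rotations" % NUM_SMPL_JOINTS)
    pose = []
    for R in joint_rotations:
        pose.extend(rotmat_to_axis_angle(R))
    return pose
```

Such a pose vector covers articulation only. Recovering a dense surface additionally requires the body shape parameters of the model, which cannot be read off the joint rotations.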