Department News

Virtual reality headset offers a glimpse of a healthy future

- 27 July 2023

Self-perception in anorexia

The daily life of people with anorexia is dominated by the fear of gaining weight and by the measures they take to prevent it. This makes it all the more difficult for those affected to achieve the weight gain that doctors urgently recommend.

Meshcapade raises $6M seed round

- 14 June 2023

Meshcapade, the spin-off from Perceiving Systems, is training foundation models for the analysis and generation of 3D humans

Matrix leads the seed round to expand Meshcapade's market-leading AI solutions, which transform pictures, videos, text, and sensor data into 3D humans in the SMPL standard body format. Meshcapade's technology and founding team spun out of the MPI for Intelligent Systems.

The Koenderink Prize at ECCV 2022

- 31 October 2022

From cartoons to science: The Sintel dataset at 10 years

The Sintel optical flow dataset appeared at ECCV 2012. Ten years later, at ECCV 2022, it was awarded the Koenderink Prize for work that has stood the test of time.

Max Planck spin-off Meshcapade wins new startup award

- 28 March 2022

A newly established prize from the Max Planck Society and the Stifterverband aims to promote start-up culture in science

Better decisions, more control: “Best Paper Award” for Tübingen researchers

- 26 June 2021

Two Cyber Valley researchers awarded for best scientific paper at world-renowned conference

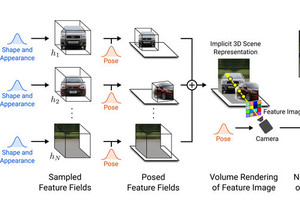

Great success for two AI researchers from the Cyber Valley ecosystem: Michael Niemeyer, PhD student at the Max Planck Institute for Intelligent Systems, and Prof. Dr. Andreas Geiger from the University of Tübingen were honored with the ‘Best Paper Award’ at this year’s Conference on Computer Vision and Pattern Recognition (CVPR) for their paper ‘GIRAFFE: Representing Scenes as Compositional Generative Neural Feature Fields’.

ELLIS PhD Program: Call for Applications

- 10 September 2020

The European Laboratory for Learning and Intelligent Systems (ELLIS) offers an interdisciplinary PhD program. The ELLIS PhD program is a key element of the ELLIS initiative; its goal is to foster and educate the best talent in machine learning and related research areas by pairing outstanding students with leading academic and industrial researchers in Europe. The program supports excellent PhD students across Europe by giving them access to leading research through the boot camps, summer schools, and workshops of the ELLIS programs. Every PhD student is supervised by one ELLIS fellow/scholar and one ELLIS member from a different country, and spends a one-year exchange at the other location.

New video-based approach to 3D motion capture makes virtual avatars more realistic than ever

- 17 June 2020

With Video Inference for Body Pose and Shape Estimation (VIBE), scientists at the Max Planck Institute for Intelligent Systems have developed a neural network that makes video-based 3D motion capture more accurate, faster, and less expensive.

Michael Black, Nikos Athanasiou, Muhammed Kocabas, Valerie Callaghan

Michael J. Black awarded “test of time” prize at the 2020 Conference on Computer Vision and Pattern Recognition (CVPR)

- 16 June 2020

Black, a Director at the Max Planck Institute for Intelligent Systems (MPI-IS), has been awarded the Longuet-Higgins Prize.

The prize honors research that has had a significant impact on the field of computer vision.

Scientists in Tübingen develop 3D head model that can be used to design better-fitting protective gear

- 24 April 2020

The FLAME model makes it possible to create realistic 3D head shapes capable of accurately mimicking human facial expressions. It could help designers come up with more comfortable protective masks for faces of different shapes and sizes.

Andreas Geiger named to "Top 40 under 40" list

- 21 November 2019

Andreas Geiger has been named to the "Top 40 under 40" list of Capital magazine.