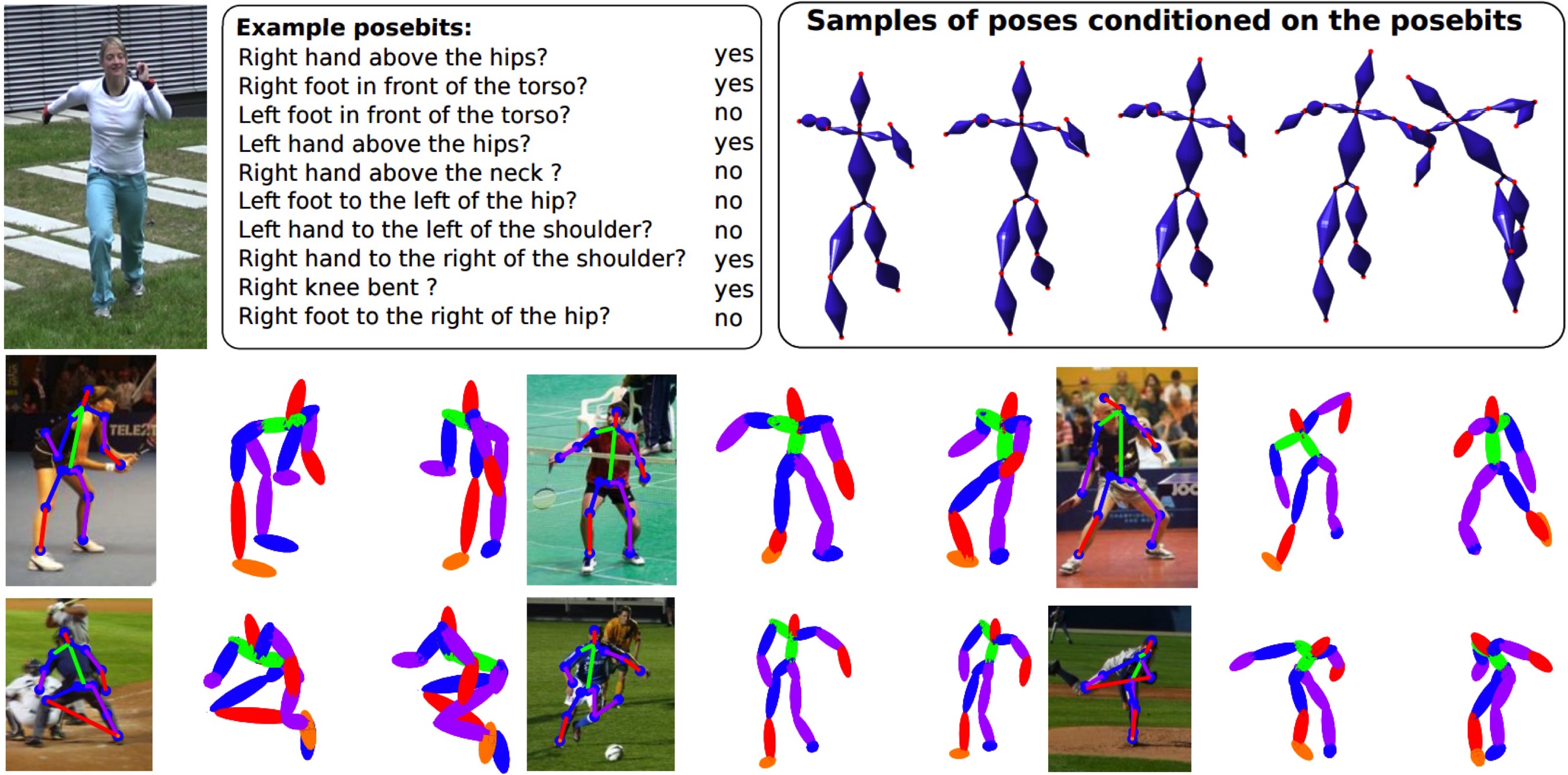

Top row: Posebits [ ] are semantic bits of information about the pose that can be inferred from image features using a trained classifier. Since posebits consist of simple yes/no questions, images can be easily annotated by humans. They may be useful for many tasks. (right) Samples of poses conditioned on the posebits depicted on the left. By conditioning the poses on posebits uncertainty about the pose is reduced. Bottom row: In [ ] we use a novel motion capture dataset to learn posed-dependent joint limits and use these to estimate 3D pose from 2D joint locations. Our new prior helps reduce the space of possible solutions to only valid 3D human poses.

The estimation of 3D human pose from 2D images is inherently ambiguous. To that end, we develop inference methods and human pose models that enable prediction of 3D pose from images. Learned models of human pose rely on training data but we find that existing motion capture datasets are too limited to explore the full range of human poses.

In [ ] we advocate the inference of qualitative information about 3D human pose, called posebits, from images. Posebits are relationships between body parts (e.g. left-leg in front of right-leg or hands close to each other). The advantages of posebits as a mid-level representation are 1) for many tasks of interest, such qualitative pose information may be sufficient (e.g. semantic image retrieval), 2) it is relatively easy to annotate large image corpora with posebits, as it simply requires answers to yes/no questions; and 3) they help resolve challenging pose ambiguities and therefore facilitate the difficult task of image-based 3D pose estimation. We introduce posebits, a posebit database, a method for selecting useful posebits for pose estimation and a structural SVM model for posebit inference.

In [ ] we make two key contributions to estimate 3D pose from 2D joint locations. First, we collect a dataset that includes a wide range of human poses that are designed to explore the limits of human pose. We notice that joint limits actually vary with pose and learn the first pose-dependent prior of joint limits. Second, we introduce a new method to infer 3D poses from 2D using the learned prior and an over-complete dictionary of poses. This results in state of the art results on estimating 3D pose from 2D pose.