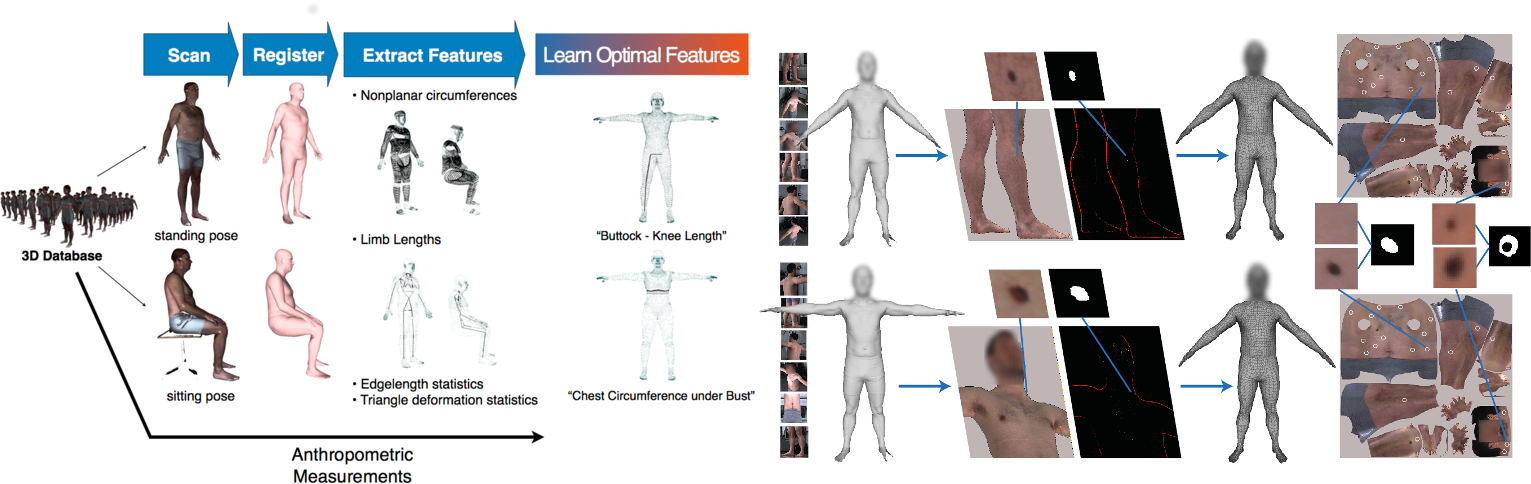

By leveraging a statistical human body model of shape and appearance, we propose innovative approaches to a variety of problems in medicine, from extracting anthropometric measurements in ergonomics (left) to screening for changes in skin lesions in dermatology (right).

Accurate models of human body shape and appearance are powerful tools for tackling a variety of problems in medicine, ergonomics, fashion, fitness, and beyond.

In [ ], we propose a solution for extracting anthropometric or tailoring measurements from 3D human body scans, which is important in applications such as virtual try-on, custom clothing, and online sizing. Existing commercial solutions identify anatomical landmarks on high-resolution 3D scans and then compute distances or circumferences directly on the scan, a process that is sensitive to acquisition noise. In contrast, we propose a novel approach, model-based anthropometry. We fit a non-rigid 3D body model to scan data in multiple poses, thus obtaining a database of registered scans. We then extract features from these registrations and learn a mapping from features to measurements using regularized linear regression.
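The last step, learning a mapping from registration features to measurements, can be illustrated with a minimal sketch. It assumes ridge (L2-regularized) linear regression as one possible form of the regularized regression mentioned above, solved in closed form through the normal equations. The function names `fit_ridge`, `predict` and `solve`, the feature vectors, the synthetic data and the regularization weight `lam` are all hypothetical; the sketch does not cover model fitting or feature extraction. Plain Python is used so the example has no dependencies.

```python
from typing import List

Matrix = List[List[float]]


def solve(a: Matrix, b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    # Augmented copy so the caller's matrices are left untouched.
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_ridge(features: Matrix, targets: List[float], lam: float) -> List[float]:
    """Hypothetical helper: return [bias, w_1, ..., w_d] minimizing
    sum (y - bias - w.x)^2 + lam * sum w_j^2 (the bias is not penalized)."""
    rows = [[1.0] + list(f) for f in features]  # prepend a bias column
    d = len(rows[0])
    # Normal equations: (X^T X + lam * I') w = X^T y, where I' skips the bias.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(d)] for i in range(d)]
    for i in range(1, d):
        xtx[i][i] += lam
    xty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(d)]
    return solve(xtx, xty)


def predict(weights: List[float], feature: List[float]) -> float:
    """Apply the learned linear mapping to one feature vector."""
    return weights[0] + sum(w * x for w, x in zip(weights[1:], feature))


if __name__ == "__main__":
    # Made-up data: measurement = 10 + 2*f1 + 3*f2 exactly.
    feats = [[1, 2], [2, 1], [3, 4], [4, 3], [5, 5]]
    meas = [18, 17, 28, 27, 35]
    w = fit_ridge(feats, meas, lam=1e-6)
    print([round(v, 3) for v in w])  # → [10.0, 2.0, 3.0]
```

Leaving the intercept unpenalized means the regularization shrinks only the feature weights; in practice a numerical library would replace the hand-written solver, and `lam` would be chosen by cross-validation.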

In [ ] and [ ], we exploit both 3D shape and appearance information (i.e., surface color and texture). In [ ], we propose a fully automated pre-screening system for detecting new skin lesions, or changes in existing ones, over almost the entire body surface. This is crucial for the early diagnosis and treatment of melanoma. Our solution is based on a multi-camera 3D stereo system. The system captures 3D textured scans of a subject at different times and then fits a non-rigid 3D body model to each scan. As a result, skin textures are accurately aligned across scans, which facilitates the detection of new or changing lesions. In a pilot study, our approach detected changes as small as 2-3 mm.
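Because the fitted body model places every scan's texture in a shared parameterization, change detection can, in principle, reduce to comparing texture maps pixel by pixel. The minimal sketch below assumes two already-aligned grayscale texture maps given as nested lists, thresholds their absolute difference, and groups changed pixels into 4-connected regions. The names `changed_regions` and `equivalent_diameter_mm`, the parameters `threshold` and `min_pixels`, and the uniform `mm_per_pixel` scale are hypothetical illustrations, not the system described in the cited work.

```python
import math
from collections import deque
from typing import List, Tuple

Image = List[List[float]]
Region = List[Tuple[int, int]]


def changed_regions(before: Image, after: Image, threshold: float,
                    min_pixels: int) -> List[Region]:
    """Return 4-connected regions where |after - before| > threshold,
    keeping only regions with at least min_pixels pixels."""
    h, w = len(before), len(before[0])
    mask = [[abs(after[r][c] - before[r][c]) > threshold for c in range(w)]
            for r in range(h)]
    seen = [[False] * w for _ in range(h)]
    regions: List[Region] = []
    for r in range(h):
        for c in range(w):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill collects one connected component.
            queue = deque([(r, c)])
            seen[r][c] = True
            region: Region = []
            while queue:
                y, x = queue.popleft()
                region.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < h and 0 <= nx < w
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Small components are treated as noise and discarded.
            if len(region) >= min_pixels:
                regions.append(region)
    return regions


def equivalent_diameter_mm(region: Region, mm_per_pixel: float) -> float:
    """Diameter of a disc with the region's area, assuming square pixels
    of a uniform, hypothetical physical size."""
    return 2.0 * math.sqrt(len(region) / math.pi) * mm_per_pixel


if __name__ == "__main__":
    # Synthetic 6x6 texture maps: a 2x2 "new lesion" plus one noisy pixel.
    before = [[0.0] * 6 for _ in range(6)]
    after = [row[:] for row in before]
    for y in (1, 2):
        for x in (1, 2):
            after[y][x] = 1.0
    after[5][5] = 1.0
    found = changed_regions(before, after, threshold=0.5, min_pixels=2)
    print(len(found))  # → 1 (the isolated pixel is filtered out)
```

A real texture atlas does not have a uniform pixel size, and raw color differences are affected by lighting, so the fixed threshold here is only a stand-in for a more robust comparison.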