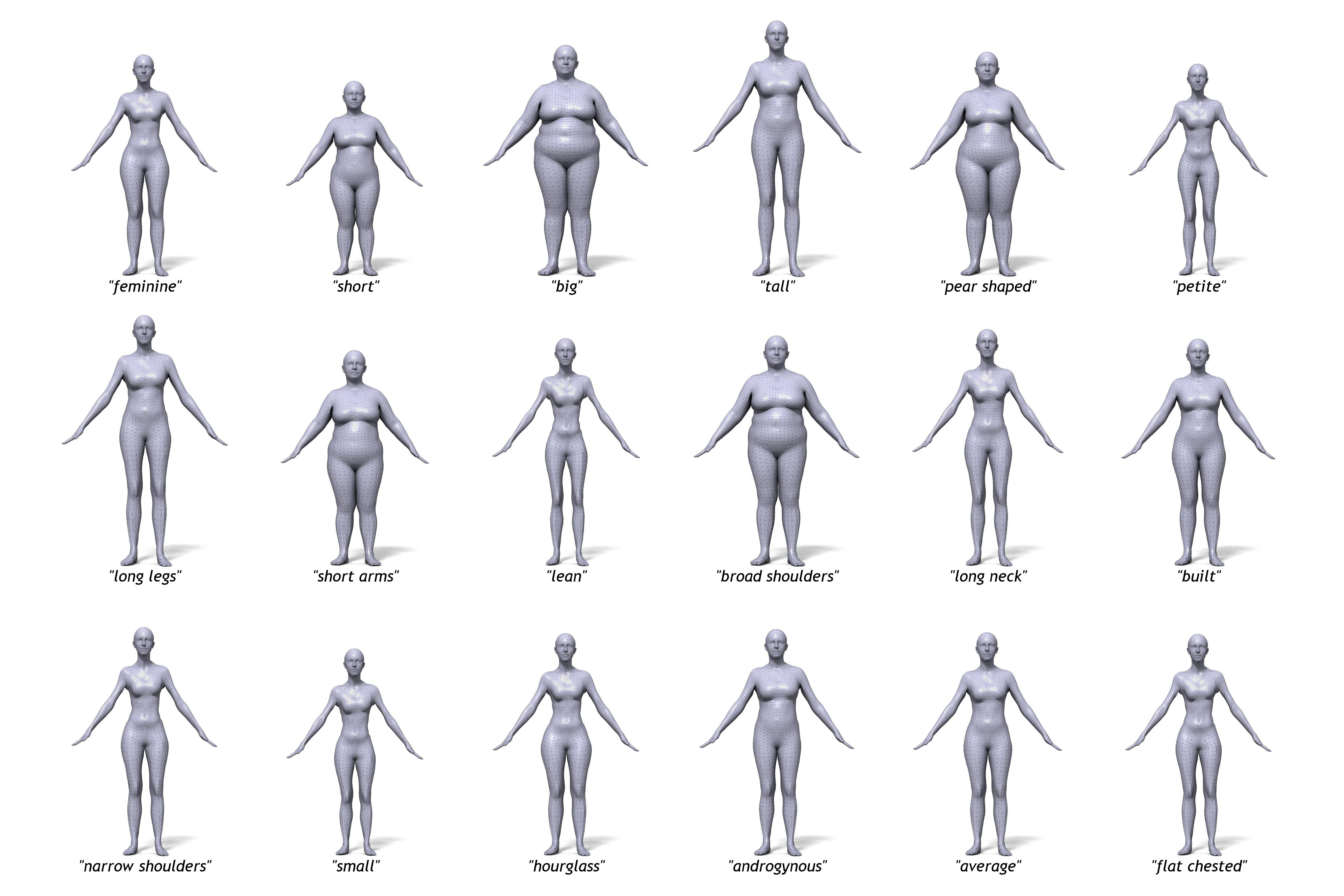

Prototypical body shapes. We generate random 3D body shapes, render them as images, and then crowdsource ratings of the images using words that describe shape. We learn a model of how 3D shape and linguistic descriptions of shape are related. Shown are the most likely body shapes, conditioned on the words below them. The ratings of “the crowd” suggest that we share an understanding of the 3D meaning of these shape attributes.

Our bodies say something about us. People perceive our shape and have names for body shapes like "hourglass" or "pear shaped". People also make social judgments based on body shape. To understand this, we have developed methods to relate linguistic descriptions to 3D body shape. This work has provided insight into social biases and has resulted in new tools for social science.

We exploit the relationship between words and shape to create realistic, metrically accurate, 3D human avatars for games, shopping, virtual reality, and health applications. Such avatars are not in wide use because solutions for creating them from high-end scanners, low-cost range cameras, and tailoring measurements all have limitations. Here we propose a simple solution and show that it is surprisingly accurate. We use crowdsourcing to generate attribute ratings of 3D body shapes corresponding to standard linguistic descriptions of 3D shape. We then learn a linear function relating these ratings to 3D human shape parameters. Given an image of a new body, we again turn to the crowd for ratings of the body shape. The collection of linguistic ratings of a photograph provides remarkably strong constraints on the metric 3D shape. We call the process crowdshaping and show that our Body Talk system produces shapes that are perceptually indistinguishable from bodies created from high-resolution scans and that the metric accuracy is sufficient for many tasks. This makes body “scanning” practical without a scanner, opening up new applications including database search, visualization, and extracting avatars from books.
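The linear mapping from word ratings to shape parameters can be illustrated with a minimal sketch. This is not the Body Talk implementation: the data are synthetic, and the function names, the 1 to 5 rating scale, and the counts of words, shape coefficients, and raters are all assumptions chosen for illustration. It fits an ordinary least-squares map with a bias term, then estimates shape coefficients for a new image from the mean of several raters' scores.

```python
import numpy as np

def fit_ratings_to_shape(ratings, shapes):
    """Least-squares fit of shapes ~ [ratings, 1] @ W (hypothetical helper)."""
    X = np.hstack([ratings, np.ones((ratings.shape[0], 1))])  # append bias column
    W, *_ = np.linalg.lstsq(X, shapes, rcond=None)
    return W

def predict_shape(W, rater_scores):
    """Average per-rater scores for one image, then apply the linear map."""
    mean_rating = np.asarray(rater_scores, dtype=float).mean(axis=0)
    return np.append(mean_rating, 1.0) @ W

# Synthetic, noise-free training data (all sizes are illustrative assumptions).
rng = np.random.default_rng(0)
n_bodies, n_words, n_coeffs = 200, 30, 8
true_W = rng.normal(size=(n_words + 1, n_coeffs))
ratings = rng.uniform(1, 5, size=(n_bodies, n_words))      # mean rating per word
shapes = np.hstack([ratings, np.ones((n_bodies, 1))]) @ true_W

W = fit_ratings_to_shape(ratings, shapes)

# A new image rated by 15 hypothetical crowd workers.
new_scores = rng.uniform(1, 5, size=(15, n_words))
estimate = predict_shape(W, new_scores)
print(estimate.shape)
```

With real crowd ratings the fit would be noisy, so a regularized regression and held-out evaluation would likely be needed; the sketch only shows the shape of the computation.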

An online application lets people create bodies and visualize how shape and language relate. Visit http://bodytalk.is.tue.mpg.de/